There is a particular kind of frustration that comes from a problem you cannot see, cannot touch, and cannot fix — but know with absolute certainty is real. For several months, my music kept stopping. Not constantly, not predictably, but often enough that every morning listening session carried a low-grade dread: is it going to skip today?

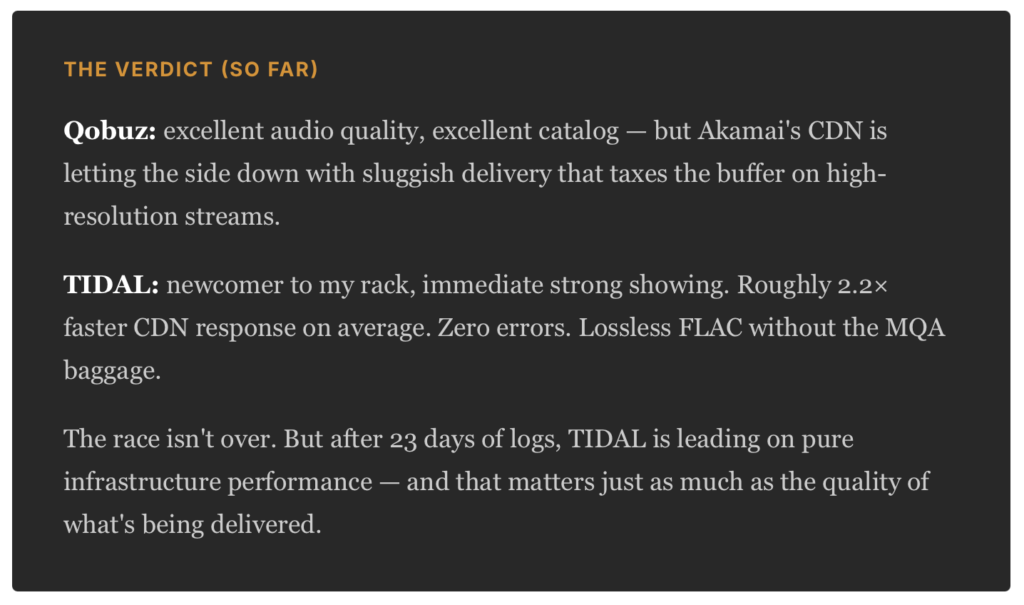

I’m going to tell you what I found. Some of it involves network diagnostics and log files; I’ll translate all of that into plain language. But the short version is this: I proved — with data — that the problem was never on my end. And then I cancelled my Qobuz subscription.

The Setup

Bear With Me, Non-Audiophiles

First, some context. Music matters to me. I’ve been playing piano for about half a century, and guitar for most of that time too. I grew up listening to high-end audio equipment my father built. These days, I spend real money on my own audio equipment. My current listening chain runs from a Roon Server (essentially a dedicated music computer) through a Bluesound NODE, into my main system. Roon fetches hi-res audio from Qobuz, a streaming service that specializes in high-fidelity music files, and delivers it without any quality compromise.

Hi-res audio from Qobuz — think 24-bit, 192,000 samples per second — requires a reasonably fast and stable internet connection to stream properly. Not extraordinary, but consistent. A rough analogy: it’s like streaming a 4K movie. You don’t need a fiber connection, but you need one that doesn’t randomly drop to dial-up speeds for ten seconds at a time.

That is exactly what was happening.
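For a sense of scale, the raw data rate of that format is simple arithmetic. The sketch below assumes two-channel (stereo) audio, which I haven't stated above, and computes the uncompressed PCM rate. Lossless compression such as FLAC typically brings the real streaming rate down, so treat the result as an upper bound rather than the exact figure Qobuz sends.

```python
def pcm_bitrate_kbps(bit_depth: int, sample_rate_hz: int, channels: int = 2) -> float:
    """Uncompressed PCM bitrate in kilobits per second (1 kbps = 1000 bits/s)."""
    return bit_depth * sample_rate_hz * channels / 1000


hi_res = pcm_bitrate_kbps(24, 192_000)  # 24-bit / 192 kHz, stereo assumed
cd = pcm_bitrate_kbps(16, 44_100)       # CD quality, for comparison

print(f"24/192 stereo PCM: {hi_res:,.0f} kbps ({hi_res / 1000:.1f} Mbps)")
print(f"16/44.1 stereo PCM: {cd:,.1f} kbps")
```

That works out to roughly 9.2 Mbps before compression, against about 1.4 Mbps for CD-quality audio.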

The Clues

Roon keeps detailed logs of everything it does, including exactly how fast it’s downloading audio from the streaming service at any given moment. When the download speed falls below what’s needed to play a track without interruption, it logs a warning. When I started pulling those warnings out of my logs, the picture was striking.

March 28 – April 6, 2026

I began my first systematic analysis. Twenty log files. Thousands of streaming events. The pattern that emerged was so clean it almost looked manufactured.

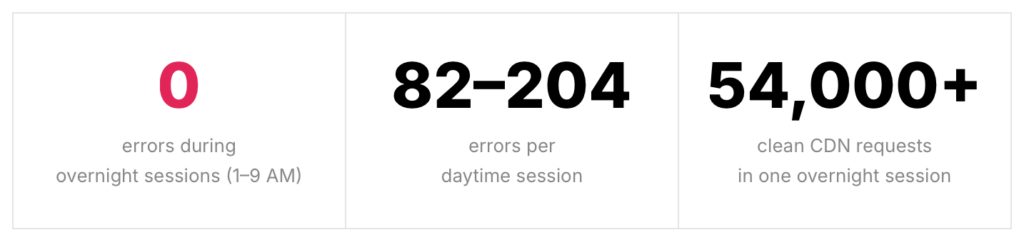

One log file covering over eight hours of overnight streaming — tens of thousands of audio data requests — recorded zero errors. The log file from the following afternoon recorded over a hundred. The server hadn’t changed. The internet connection hadn’t changed. The only variable was the clock on the wall.

In the IT world, this is called a time-of-day pattern, and it almost always means one thing: peak-hour congestion somewhere on the delivery network.
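For the technically curious, here is a minimal sketch of how a time-of-day pattern can be pulled out of log files: count the error lines per hour and look at the shape. The timestamp layout and the sample lines are assumptions made up for illustration, not real Roon output; the `UnrecoverableError` marker is the error name I quote in my support email.

```python
import re
from collections import Counter
from typing import Iterable

# Assumed line shape: "04/03 14:07:55 Warn: ... UnrecoverableError ..."
# The timestamp layout is a guess for illustration; adjust it to the real logs.
TIMESTAMP = re.compile(r"^(\d{2})/(\d{2}) (\d{2}):(\d{2}):(\d{2})")
ERROR_MARKER = "UnrecoverableError"  # error name quoted in the support email


def errors_by_hour(lines: Iterable[str]) -> Counter:
    """Count error lines per hour of day (0-23)."""
    counts = Counter()
    for line in lines:
        if ERROR_MARKER not in line:
            continue
        match = TIMESTAMP.match(line)
        if match:
            counts[int(match.group(3))] += 1  # group 3 is the hour
    return counts


# Made-up sample lines, not real log output.
sample = [
    "04/03 02:15:01 Info: block fetched",
    "04/03 09:42:10 Warn: UnrecoverableError fetching block 0",
    "04/03 09:58:33 Warn: UnrecoverableError fetching block 0",
    "04/03 14:07:55 Warn: UnrecoverableError fetching block 0",
]

hourly = errors_by_hour(sample)
for hour in sorted(hourly):
    print(f"{hour:02d}:00  {'#' * hourly[hour]}  ({hourly[hour]})")
```

Run over real files (for example by passing an open file object as `lines`), the same counter makes a clean overnight window and a noisy daytime window visible at a glance.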

Writing to Qobuz

April 7, 2026

I wrote Qobuz a detailed support email — not a “my music keeps stopping please help” complaint, but a technical brief. I had run network path analysis, checked DNS resolution, verified my firewall and security configuration, and ruled out every local cause. I told them exactly what I had found: the problem was consistent with peak-hour congestion on the Miami-area Akamai server that delivers audio to Florida customers.

“Analysis of 20 Roon log files covering March 28th through April 6th reveals a clear and consistent pattern: sessions running overnight — approximately 1:00–9:00 AM ET — recorded zero streaming errors despite tens of thousands of active Qobuz CDN requests. Sessions running during daytime and evening hours recorded hundreds of failures each.”

— from my April 7th email to Qobuz Support

I also asked them to investigate the specific Akamai edge server — I had identified it by name: a1094.dscv.akamai.net, serving out of Miami — and to look at its performance during daytime peak hours for Florida customers.
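The DNS part of that check, asking several resolvers for the same name and confirming they agree, can be sketched roughly as follows. This is not the exact procedure I ran. It assumes the `dig` command-line tool is installed, and the helper names `resolve_with` and `resolvers_agree` are hypothetical. The two addresses in the demonstration are the ones reported in my support email.

```python
import subprocess
from typing import Dict, FrozenSet


def resolve_with(resolver_ip: str, hostname: str) -> FrozenSet[str]:
    """Ask one specific resolver for A records using the `dig` CLI."""
    result = subprocess.run(
        ["dig", "+short", f"@{resolver_ip}", hostname, "A"],
        capture_output=True, text=True, timeout=10, check=True,
    )
    # +short can also print CNAME targets; keep only IPv4-looking lines.
    return frozenset(
        line for line in result.stdout.split()
        if line.count(".") == 3 and line.replace(".", "").isdigit()
    )


def resolvers_agree(answers: Dict[str, FrozenSet[str]]) -> bool:
    """True when every resolver returned the same non-empty set of addresses."""
    distinct = set(answers.values())
    return len(distinct) == 1 and all(distinct)


# Addresses reported in the support email; in practice each entry would come
# from resolve_with(resolver_ip, "a1094.dscv.akamai.net").
reported = frozenset({"23.205.165.70", "23.205.165.72"})
answers = {"local": reported, "8.8.8.8": reported, "1.1.1.1": reported}
print(resolvers_agree(answers))
```

Keeping the comparison separate from the lookup means the agreement check can be exercised without touching the network.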

If you really want to read the entire email I sent, including all of the technical findings, it is reproduced in full below.

Hello Qobuz Support,

I am a Qobuz subscriber located in Sarasota, FL and have been experiencing consistent streaming failures for several weeks. I have conducted extensive diagnostics this morning and wanted to share my findings in detail so your engineering team can investigate on the CDN side.

—

SYMPTOM

My streaming client (Roon Server 2.64 build 1646, running on Ubuntu Server 24.04) logs show repeated “UnrecoverableError” failures in Roon’s internal streaming cache (FTMSI-B) when attempting to fetch the initial data block (block 0) from your Akamai CDN. Errors have been logged hundreds of times per day across multiple weeks. Playback typically self-heals after one or two retries, but results in audible track skips.

—

DIAGNOSTICS COMPLETED

1. Network path analysis (MTR, 100 cycles)

Zero packet loss end-to-end. Final Akamai edge node (a23-205-165-70.deploy.static.akamaitechnologies.com) responds at a consistent 9-10ms with minimal jitter. The network path is completely clean.

2. DNS resolution

All three resolvers tested — local Unbound, Google (8.8.8.8), and Cloudflare (1.1.1.1) — consistently return identical IPs: 23.205.165.70 and 23.205.165.72 (a1094.dscv.akamai.net). DNS is functioning correctly and geo-routing me to the appropriate Miami-area edge node for my location.

3. Traffic shaping / QoS

No egress traffic shaping configured on the Roon Server host. Default fq_codel qdisc only.

4. Firewall / IDS

OPNsense with Suricata IPS — no Suricata alerts for Qobuz or Akamai traffic. Ruled out as a factor.

5. Roon version

Running current release (2.64 build 1646, updated April 1st). Errors predate this update, going back to at least March 28th — the oldest logs available.

—

KEY FINDING: STRONG TIME-OF-DAY PATTERN

Analysis of 20 Roon log files covering March 28th through April 6th reveals a clear and consistent pattern:

– Sessions running overnight (approximately 01:00-09:00 ET): ZERO streaming errors, despite tens of thousands of active Qobuz CDN requests

– Sessions running during daytime and evening hours: Hundreds of UnrecoverableError failures per session

Specific example: A log file covering April 3rd 01:13 through 09:34 (over 8 hours of active Qobuz streaming, 54,000+ CDN-related log entries) recorded zero errors. Log files covering daytime sessions on the same and adjacent days recorded 82-204 errors each.

Network conditions, DNS resolution, server configuration, and Roon version are identical between the clean overnight sessions and the error-filled daytime sessions. The only variable is time of day.

This pattern is strongly consistent with peak-hour congestion or capacity constraints on the Miami-area Akamai edge node (a1094.dscv.akamai.net, 23.205.165.70/72) that serves Florida customers.

—

REQUEST

I would ask that your engineering or CDN operations team investigate the capacity and performance of the a1094.dscv.akamai.net edge node during peak daytime hours (approximately 09:00-23:00 ET), specifically for initial block (block 0) fetch latency and timeout rates for Florida-region customers.

I am happy to provide full Roon log excerpts or any additional diagnostic output if it would help your investigation.

Thank you for your attention to this.

Best regards,

Steve Haney

steve@thehaneys.net

April 11, 2026

Qobuz replied. They acknowledged the problem and gave me an explanation I found credible: a caching anomaly in their content delivery network. In some cases, certain servers were delivering incomplete or corrupted audio files. They said they’d performed a cache purge — essentially clearing the bad data from their servers so fresh copies would be fetched — and asked if things had improved.

“Our teams have identified the source of the problem. It is related to a caching anomaly within our content delivery network (CDN). In some cases, certain servers may temporarily deliver incomplete or corrupted audio files.”

— from Qobuz Support

April 12, 2026

A follow-up arrived. Jesse at Qobuz Support told me the ticket had been marked closed but that he was personally monitoring it and would follow up once the team had more information. He asked if I’d be willing to do another log analysis to see whether things had improved.

I said yes. I would run another analysis and report back.

That was the last I heard from Qobuz.

The Second Investigation

April 17 – May 10, 2026

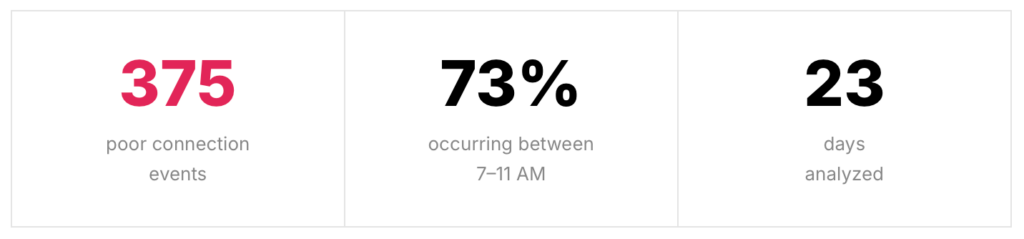

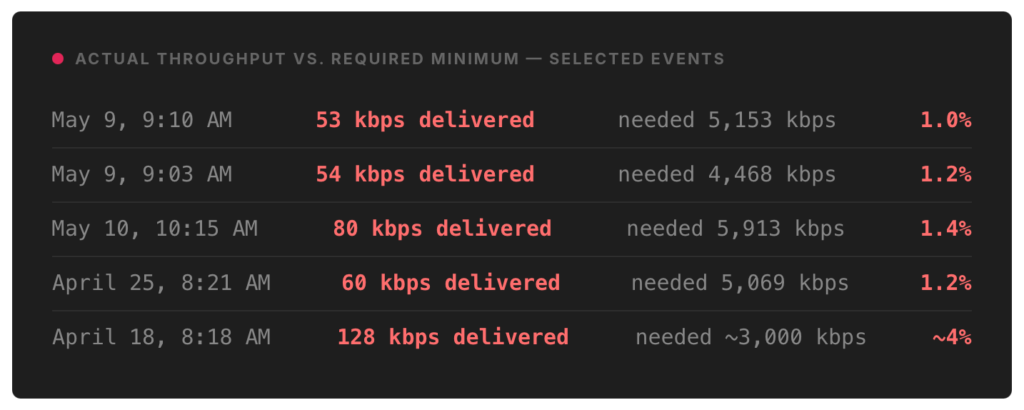

I analyzed 21 additional log files covering 23 days of listening — approximately 130 megabytes of data, around 450,000 lines of logs. The findings were not encouraging.

The time-of-day pattern from my first investigation had not improved — it had, if anything, sharpened. Nearly three-quarters of all failures occurred in the morning window between 7 and 11 AM. The cache purge had not fixed the underlying problem.

How bad were the drops?

To play 24-bit hi-res audio without interruption, Roon needs a sustained download speed from Qobuz’s servers. Think of it as a floor the connection needs to stay above. Here’s what I was actually receiving:

For Non-Audiophiles

To put those percentages in context: if you ordered a glass of water and received a thimble, the restaurant would be delivering roughly the same proportion of what you asked for. 84 individual events across 23 days showed throughput under 200 kbps. Some of these lasted five to ten minutes at a stretch.
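Counting "events" like these means grouping consecutive slow measurements into one episode. Here is a minimal sketch of that grouping, assuming the throughput readings have already been extracted as time-ordered `(timestamp, kbps)` pairs. That input format and the function name `low_throughput_events` are hypothetical, and the sample readings are made up. The 200 kbps floor is the threshold mentioned above.

```python
from datetime import datetime, timedelta
from typing import Iterable, List, Tuple

Sample = Tuple[datetime, float]  # (time of measurement, throughput in kbps)


def low_throughput_events(
    samples: Iterable[Sample], floor_kbps: float = 200.0
) -> List[Tuple[datetime, datetime]]:
    """Group consecutive below-floor samples into (start, end) events.

    Assumes the samples arrive in time order.
    """
    events = []
    start = last = None
    for when, kbps in samples:
        if kbps < floor_kbps:
            if start is None:
                start = when  # first slow sample opens an event
            last = when
        elif start is not None:
            events.append((start, last))  # a healthy sample closes the event
            start = last = None
    if start is not None:
        events.append((start, last))  # event still open at end of data
    return events


# Made-up one-minute samples for illustration, not measurements from the logs.
t0 = datetime(2026, 5, 1, 7, 0)
readings = [9000, 150, 120, 180, 8800, 90, 9100]
samples = [(t0 + timedelta(minutes=i), kbps) for i, kbps in enumerate(readings)]

events = low_throughput_events(samples)
for start, end in events:
    minutes = (end - start).total_seconds() / 60
    print(f"{start:%H:%M} to {end:%H:%M}  ({minutes:.0f} min between first and last low sample)")
```

With real data, the length of each event is what separates a momentary blip from the multi-minute stalls described above.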

The Smoking Gun

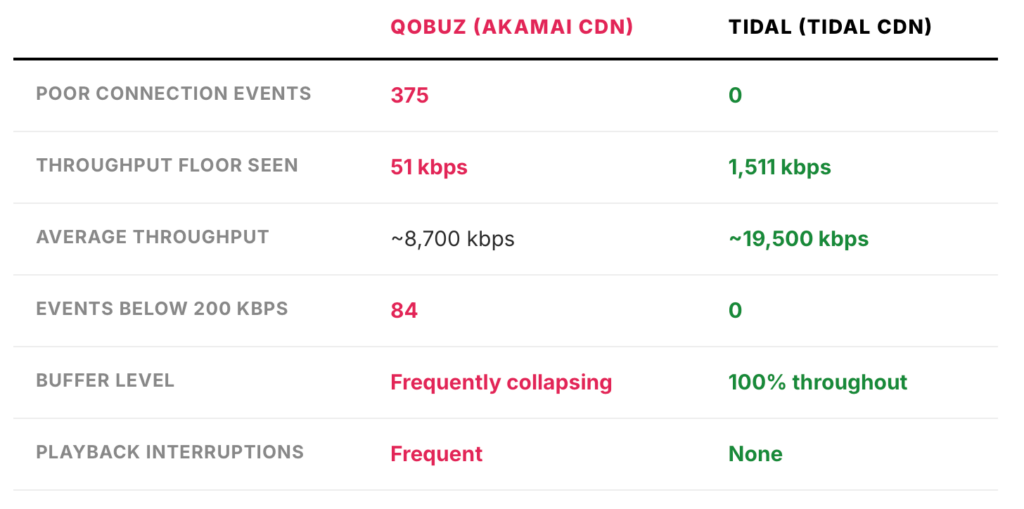

Good detectives don’t just collect evidence of the crime — they find the comparison that makes the evidence undeniable. Here’s mine.

Around the time of my second analysis, I added TIDAL as a secondary streaming service alongside Qobuz. TIDAL also offers hi-res audio. Same Roon Server. Same internet connection. Same Bluesound NODE. Same house, same morning, same cup of coffee.

Both services were streaming 24-bit hi-res audio to the same device, over the same connection, through the same firewall. The sole difference was the company delivering the audio.

This comparison eliminates every possible local explanation — my router, my ISP, my Roon configuration, my Bluesound endpoint — all in one stroke. If any of those were the cause, TIDAL would suffer the same failures. It doesn’t. The only thing that differs is whose servers are delivering the audio. Qobuz uses Akamai. TIDAL uses its own infrastructure. Akamai struggles in my region during morning hours. TIDAL does not.

Why It Matters – and Why I’m Sharing It

If you’re a normal person who just wants music to play, none of the kilobits-per-second stuff matters to you directly. What matters is this: your streaming service may be failing you, and you’d never know it unless you investigated. The music just… stops. Or skips. And the easiest explanation — the one you’re most likely to assume — is that your internet connection had a hiccup. Maybe it did. But maybe it didn’t.

If you’re a Qobuz subscriber in Florida, or anywhere in the southeastern United States, and you’ve been experiencing morning skips and dropouts: it’s worth knowing that this was a documented, ongoing infrastructure problem with Qobuz’s Akamai CDN. It wasn’t your router. It wasn’t your ISP. It wasn’t your streaming app.

And if you’re an audiophile wondering whether TIDAL’s hi-res tier holds up under real-world conditions: in my experience, measured over dozens of sessions on the same hardware, it absolutely does. The contrast could not have been more stark.

As for me — I’ve moved on. The music plays now, every morning, without drama. Sometimes the best diagnostic result is knowing exactly which variable to change.

Steve Haney is a Sarasota-based IT consultant and reluctant audiophile who apparently reads server logs for fun. His Roon server runs on Ubuntu 24.04. The Bluesound NODE has no idea how closely it’s being monitored.